Purpose Beyond Productivity

There's something quietly ironic happening in the world of AI right now, and I think about it a lot.

The pitch has always been: AI is going to do more for you, so you can do less. It's going to handle the repetitive stuff, reduce friction, solve problems faster, and give you your time back. That's the promise. And in many ways, it's a real one.

But look around. Especially in places like Silicon Valley, or really anywhere people are close to this technology, what do you actually see? You see people running harder. Longer hours. More urgency. More output. An arms race where the tool that was supposed to create slack has instead become fuel for acceleration. Everyone wants to be first. Everyone wants to be ahead. The same dynamic that has always driven competition is now amplified by tools that increase the speed of execution.

The result is a paradox: the technology that was supposed to liberate us is, at least for now, intensifying the very pressures it promised to relieve.

Faster tools, faster pace. The wheel just keeps spinning.

And the data backs this up. Workday's 2026 research found that 37–40% of time supposedly saved by AI gets immediately eaten by reviewing, correcting, and verifying AI-generated output. You save an hour drafting something; you spend 25 minutes fact-checking it because you can't fully trust it. There's even a term emerging for this phenomenon: "workslop," low-quality AI output that creates more cleanup work than it saves. Meanwhile, 89% of managers reported no measurable change in productivity despite rising AI adoption across their organizations. The machines are busy. The needle isn't moving. This isn’t because AI doesn’t work. It’s because productivity is becoming easier to generate and harder to measure meaningfully.

For decades, productivity functioned as a reliable proxy for value. More output meant more progress. But as the marginal cost of producing content, code, analysis, and decisions trends toward zero, that proxy starts to break. When output is abundant, it stops being a differentiator. And yet, most of the current narrative around AI is still anchored in exactly that: more output, more efficiency, more growth. We are applying an extraordinary technology to optimize a metric that is quietly losing its meaning. Somewhere in that loop, the human gets squeezed.

The identity problem nobody's talking about

Here's where it gets more interesting, and more personal for me.

For much of human history, people found meaning and identity in two primary places: community and faith. Church, or the equivalent in other traditions, gave people not just a belief system, but a sense of belonging, ritual, and purpose that extended far beyond daily work. Then, gradually, for a lot of people in the Western world, that began to fade. And something else took its place.

Work.

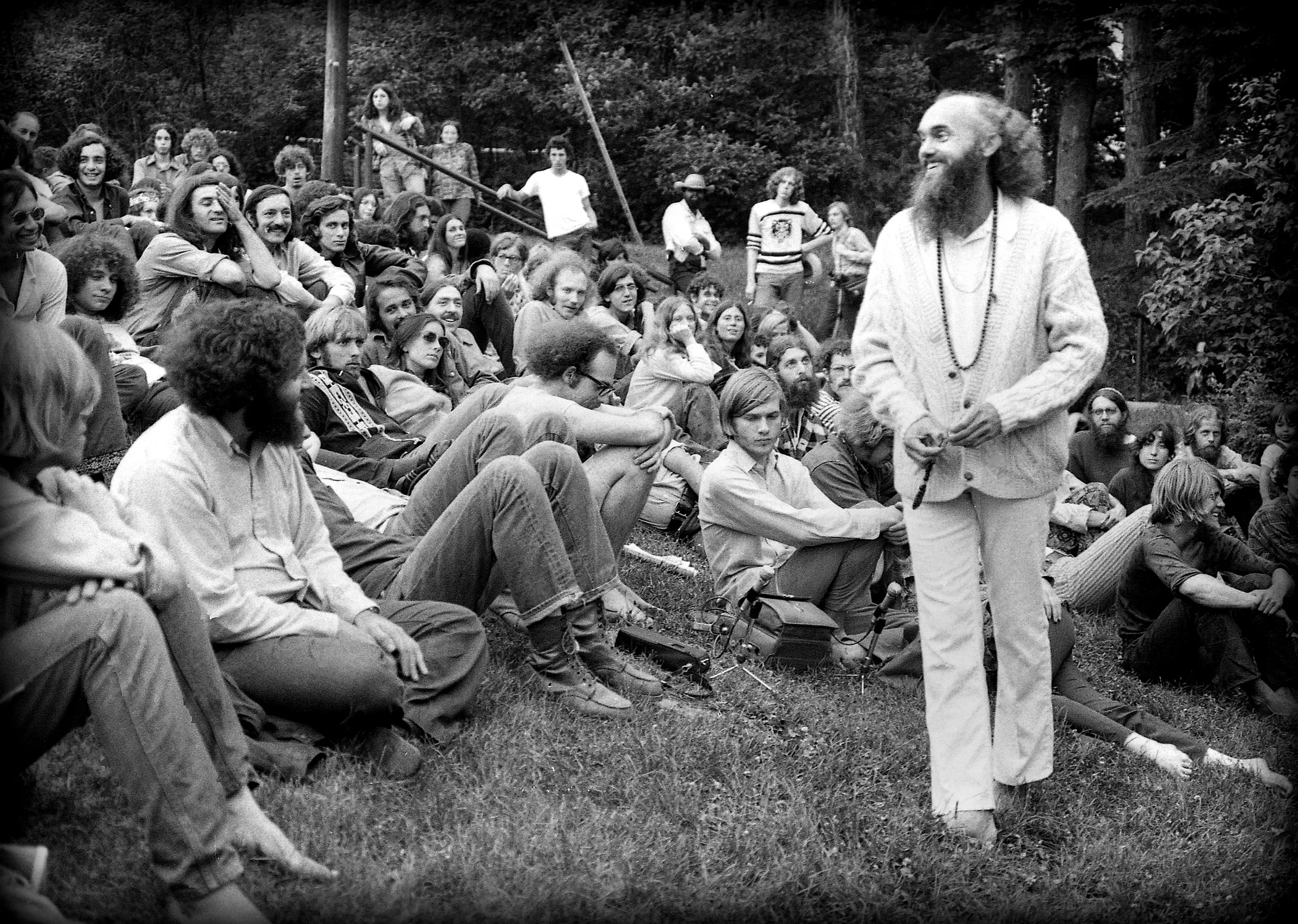

As Ram Dass, the Harvard professor turned spiritual teacher, would put it, our jobs became our somebody-ness. The question "what do you do?" became a proxy for "who are you?" Your title, your company, your output: these became the scaffolding of identity. And for a lot of high achievers, that scaffolding is load-bearing. Remove it, and something much deeper starts to shake.

Ram Dass, born Richard Alpert, Harvard professor turned spiritual teacher, spent his life asking the questions productivity culture never makes room for. Who are you, beyond what you do?

Now enter AI. Not just as a tool, but as something genuinely capable of performing significant portions of what many people do for a living. Economists and researchers have started using a term for what's emerging: the AI precariat, a growing class of workers in a state of precarious, AI-disrupted employment. The numbers are stark: an estimated 980 million jobs worldwide face high disruption risk, and 41% of employers intend to reduce their workforce by 2030.

But the more important, and more under-discussed, shift isn’t economic. It’s existential. Because what’s being disrupted isn’t just income. It’s identity. As the World Economic Forum noted, the loss of meaning and purpose tied to work is “a significant but often overlooked consequence” of AI-driven transformation. So the real question isn’t just: Will AI take jobs?

It’s:

What happens to identity when productivity is no longer required to prove your value?

The organizational blind spot

From an organizational standpoint, the conversation around AI is almost entirely focused on efficiency. And it makes sense. The business case is clean. Map tasks. Identify automation opportunities. Model cost savings. Reduce headcount. Present the slide.

But what that analysis almost never includes is the human side of the equation. Not in terms of performance, but in terms of meaning.

When you automate a significant portion of someone's role, or eliminate it entirely, you're not just changing a job description. You're disrupting someone's sense of contribution, their daily structure, their professional identity, and in many cases their financial security. That's not a rounding error. That's a profound transition, and most organizations are completely unprepared to support people through it.

MIT Sloan Management Review put it plainly in a recent piece: "The gap between what HR currently is and what it could be has never been wider." There are two paths. One leads to a weakened HR function: more automated, more reactive, more focused on headcount efficiency. The other elevates HR into something more important: the architect of human transformation inside the organization. The uncomfortable truth is that most HR teams are currently on the first path without fully realizing it.

Two paths. Most organizations are already on one of them; they just haven't noticed yet. Photo by Caleb Jones on Unsplash.

This is where the real work begins. Not just: How do we reskill people?

But:

How do we help people reinterpret their value?

How do we support identity transitions, not just skill transitions?

How do we create environments where meaning doesn’t collapse when roles change?

These questions are harder. They don’t fit neatly into workforce planning models. But they will determine whether organizations emerge from this transition with cohesion or with fragmentation.

What fills the void?

And if that's true inside organizations, it becomes even more significant at the level of society.

When large numbers of people lose not just their jobs, but their primary source of identity and daily structure, the impact extends far beyond employment metrics. It becomes a crisis of meaning. We’re already seeing early signals: between 2022 and mid-2025, AI companion apps surged by 700%. These are apps designed to alleviate loneliness, provide emotional support, and simulate human connection. Research suggests they work, easing loneliness to a degree roughly comparable with real human interaction. Which, if you think about it, is both impressive and deeply concerning. Because it suggests the connection gap isn’t hypothetical.

It’s already forming. And we’re trying to fill it with more AI. That, I think, is the wrong direction. Humans are fundamentally social. Our biology was shaped in environments of tribes, shared rituals, and face-to-face interaction. And over the past few decades, through screens, social media, and increasingly digital lives, we’ve drifted away from that. More connected and more isolated at the same time.

AI has the potential to accelerate that drift. Or reverse it.

This is where I think the real opportunity lies, and it's one that the most forward-thinking organizations are starting to recognize. The shift isn't just from AI to more AI. It's from AI to EI: emotional intelligence. As machines absorb more of the execution layer, what remains distinctly and irreducibly human becomes more valuable, not less: judgment, empathy, creativity, trust, the ability to sit with someone and understand what they actually need. These aren't things AI can replicate. And they also happen to be the things that make life feel meaningful.

If we're willing to look up from our screens long enough to notice, there's an invitation here: to come back to something older and more essential. Community, conversation, presence, each other. Not as a rejection of technology, but as its complement. If AI does what it promises and genuinely absorbs much of the execution layer of work, then what opens up is something we haven't had in a while: space. Space to ask what we actually want to do with our time, our energy, our lives.

That, to me, is the most exciting possibility of all. And it has nothing to do with productivity.

This is what we're wired for. Long before productivity metrics and job titles, meaning lived here, around a fire, with other people.